Image relighting is the task of simulating a change of light source in an image. It is a challenging inverse problem that is usually solved by estimating the scene geometry, the reflectance and the lighting. The results often contain artifacts that make the output look unrealistic.
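To make the classical decomposition concrete, here is a minimal sketch that assumes a Lambertian (perfectly diffuse) surface, a single distant light, and per-pixel normals that are already known. These assumptions and the function names `lambertian_shading` and `relight` are illustrative only and are not taken from [1]. In practice the normals and reflectance must themselves be estimated, which is where most artifacts come from.

```python
import numpy as np

def lambertian_shading(normals, light_dir):
    """Per-pixel shading max(0, n . l) for unit normals of shape (H, W, 3)."""
    l = np.asarray(light_dir, dtype=float)
    l = l / np.linalg.norm(l)
    return np.clip(normals @ l, 0.0, None)

def relight(image, normals, src_light, dst_light, eps=1e-6):
    """Divide out the source shading to estimate albedo, then re-shade."""
    src = lambertian_shading(normals, src_light)
    albedo = image / np.maximum(src, eps)  # eps avoids division by zero in shadow
    return albedo * lambertian_shading(normals, dst_light)

# Toy 1x2 grayscale "image": one pixel faces the camera (+z),
# the other is tilted toward +x.
normals = np.array([[[0.0, 0.0, 1.0], [0.6, 0.0, 0.8]]])
true_albedo = np.array([[0.5, 0.5]])
image = true_albedo * lambertian_shading(normals, [0.0, 0.0, 1.0])

# Move the light from the front (+z) to the side (+x).
relit = relight(image, normals, [0.0, 0.0, 1.0], [1.0, 0.0, 0.0])
print(relit)
```

With the light moved to the side, the front-facing pixel falls into shadow and the tilted pixel keeps part of its brightness. Pixels that are fully shadowed in the source image carry no albedo information, a simple illustration of why the problem is ill-posed.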

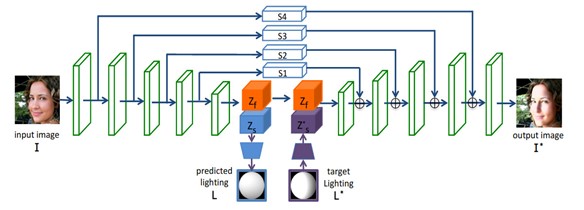

An interesting approach to portrait relighting is proposed in [1]. The authors train an hourglass network on a dataset that they built for this task. The network structure is illustrated in Fig. 1.

Fig. 1 Network structure as proposed in [1]

The network contains an encoder and a decoder, and four skip connections are introduced to connect features at different scales in the encoder with the corresponding ones in the decoder.

The network takes a face image I and a target lighting L* as input. The encoder extracts lighting features Zs and face features Zf, where the face features should be illumination-independent. A regression network predicts a lighting model L from the lighting features Zs.

The target lighting features Zs* are predicted from the target lighting L*, then concatenated with the face features Zf and given as input to the decoder.

The network outputs an image of the face lit by the target lighting L*.
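The data flow described above can be sketched in a few lines of NumPy. This is only a shape-level illustration with random, untrained weights, not the implementation from [1]. The 9-dimensional lighting vector, the channel counts, the 1x1 channel mixing used in place of real convolutions, and all function and parameter names are assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

N_LIGHT = 9              # assumed size of the lighting vector (not given in the text)
C_FACE, C_LIGHT = 8, 4   # assumed channel split of the bottleneck into Zf and Zs
N_SCALES = 4             # one skip connection per scale

def downsample(x):
    """Halve spatial resolution with 2x2 average pooling; x is (C, H, W)."""
    c, h, w = x.shape
    return x.reshape(c, h // 2, 2, w // 2, 2).mean(axis=(2, 4))

def upsample(x):
    """Double spatial resolution by nearest-neighbour repetition."""
    return x.repeat(2, axis=1).repeat(2, axis=2)

def mix(x, weight):
    """Stand-in for a conv layer: linear map over channels, then ReLU."""
    return np.maximum(np.einsum("oc,chw->ohw", weight, x), 0.0)

def init_params(c=8):
    w = lambda o, i: rng.normal(scale=0.3, size=(o, i))
    return {
        "enc": [w(c, 1)] + [w(c, c) for _ in range(N_SCALES - 1)],
        "bottleneck": w(C_FACE + C_LIGHT, c),
        "light_out": w(N_LIGHT, C_LIGHT),   # Zs -> L
        "light_in": w(C_LIGHT, N_LIGHT),    # L* -> Zs*
        "dec": [w(c, C_FACE + C_LIGHT + c)]
               + [w(c, 2 * c) for _ in range(N_SCALES - 1)],
        "out": w(1, c),
    }

def encode(image, p):
    """Return face features Zf, lighting features Zs and the skip features."""
    skips, x = [], image
    for weight in p["enc"]:
        x = mix(x, weight)
        skips.append(x)          # kept for the matching decoder scale
        x = downsample(x)
    z = mix(x, p["bottleneck"])
    return z[:C_FACE], z[C_FACE:], skips

def relight(image, target_light, p):
    z_face, z_light, skips = encode(image, p)
    # Regress the source lighting L from spatially pooled lighting features Zs.
    source_light = p["light_out"] @ z_light.mean(axis=(1, 2))
    # Predict target lighting features Zs* from L* and tile them spatially.
    zs_target = np.maximum(p["light_in"] @ target_light, 0.0)
    zs_target = np.broadcast_to(zs_target[:, None, None], z_light.shape)
    # Decoder input: face features Zf concatenated with Zs*.
    x = np.concatenate([z_face, zs_target], axis=0)
    for weight, skip in zip(p["dec"], reversed(skips)):
        x = mix(np.concatenate([upsample(x), skip], axis=0), weight)
    return mix(x, p["out"]), source_light

params = init_params()
image = rng.random((1, 32, 32))            # single-channel face image I
target_light = rng.normal(size=N_LIGHT)    # target lighting L*
relit, source_light = relight(image, target_light, params)
print(relit.shape, source_light.shape)
```

Note that the predicted source lighting depends only on the input image, while the target lighting enters the decoder only through Zs*. This separation is what allows the same face features to be re-rendered under a different light.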

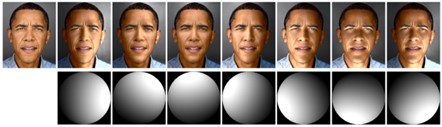

An example is shown in Fig. 2.

Fig. 2 Image example from [1]: a portrait image and a target lighting are given as input to the network, which generates a relit portrait.

Author: Gabriela Ghimpeteanu, Coronis Computing.

Bibliography

[1] H. Zhou, S. Hadap, K. Sunkavalli, and D.W. Jacobs, Deep single-image portrait relighting, in IEEE/CVF International Conference on Computer Vision (ICCV), October 2019, https://doi.org/10.1109/ICCV.2019.00729.